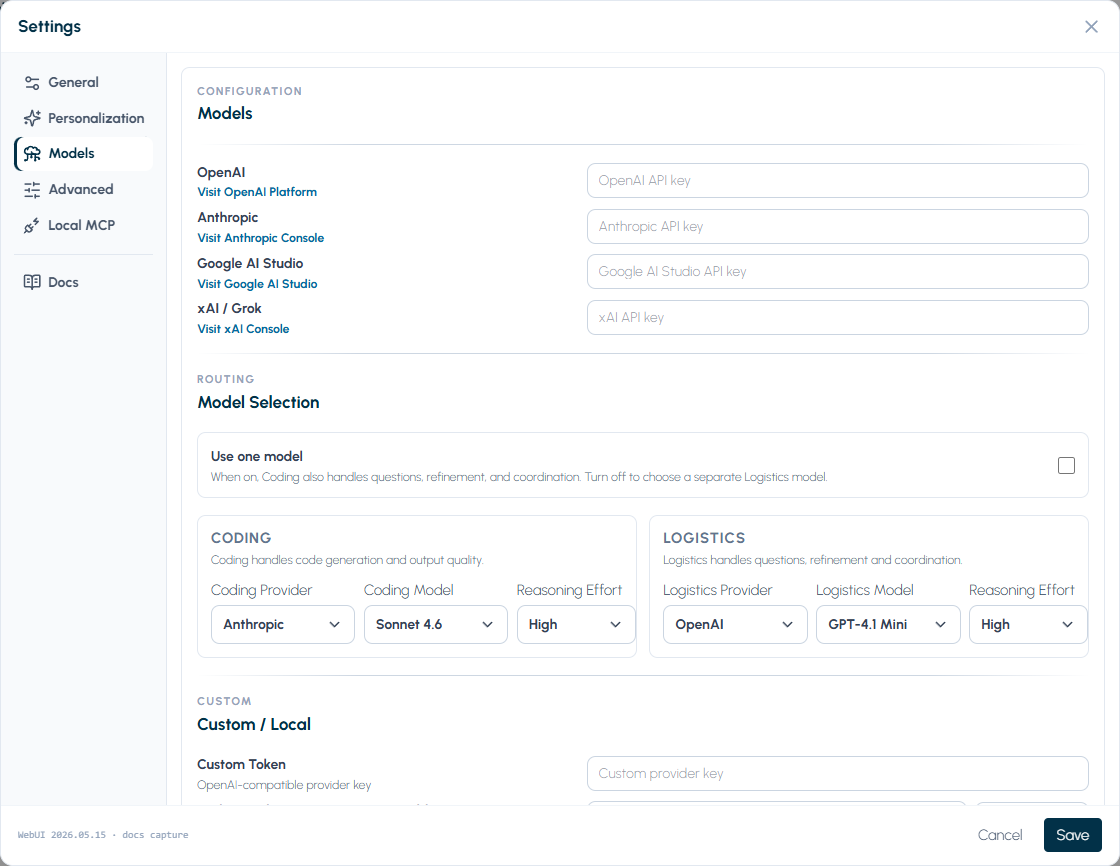

AI Providers

Use this page to choose provider roles, configure hosted provider keys, and set up Custom / Local when you want an OpenAI-compatible local or gateway endpoint.

Provider model in Genie

Genie separates provider settings into two roles:

- Coding: code generation.

- Logistics: preflight, QUERY, DATA, and ASK coordination.

You can point both roles at the same provider, or split them when you want different cost, speed, or behavior profiles.

Supported provider choices

The Settings UI currently exposes these provider families for both roles:

| Provider | What it means in Genie | Typical use |
|---|---|---|
| Custom / Local | OpenAI-compatible endpoint with an arbitrary model id | Local models, self-hosted gateways, or compatible proxies |
| OpenAI | Hosted OpenAI account and model selection | General default for daily Genie usage |
| Anthropic | Hosted Anthropic account and model selection | Alternate hosted reasoning/coding profile |
| xAI / Grok | Hosted xAI account with Grok model selection | Grok-backed Coding or Logistics workflows |
| Google | Hosted Google account and model selection | Alternate hosted provider path |

Bring your own keys

Genie does not bundle model usage.

- Your Genie activation key unlocks product access.

- Your provider keys authorize the models you choose.

- Billing, quotas, and account policy for hosted models remain with the provider account you configure.

That means activation and provider credentials are separate systems.

Role-scoped settings

Each role can carry its own:

- provider

- model

- reasoning effort

- API key

- custom endpoint when Custom / Local is selected

When a role uses Custom / Local, Genie also exposes:

- a free-text model id field

- an OpenAI-compatible endpoint field

- a Test Connection button

Both roles are first-class. You can run hosted providers, local providers, or a mix of both without changing how Genie presents the main workflow.
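As a rough illustration of the role-scoped fields listed above, the sketch below models one role's settings as a Python dataclass. The `RoleSettings` class, its field names, and the `validate` helper are hypothetical and are not Genie's actual settings schema; the model ids and key strings are placeholders.

```python
from dataclasses import dataclass
from typing import List, Optional

CUSTOM_LOCAL = "Custom / Local"


@dataclass
class RoleSettings:
    """Hypothetical per-role settings; not Genie's real schema."""
    provider: str
    model: str
    reasoning_effort: Optional[str] = None
    api_key: Optional[str] = None
    endpoint: Optional[str] = None  # only meaningful for Custom / Local

    def validate(self) -> List[str]:
        """Return a list of human-readable problems (empty if none)."""
        problems: List[str] = []
        if self.provider == CUSTOM_LOCAL:
            if not self.endpoint:
                problems.append("Custom / Local needs an endpoint")
            if not self.model:
                problems.append("Custom / Local needs a model id")
        elif self.endpoint:
            problems.append("endpoint only applies to Custom / Local")
        return problems


# A mixed setup: hosted provider for Coding, local endpoint for Logistics.
coding = RoleSettings(
    provider="OpenAI",
    model="example-hosted-model",      # placeholder model id
    api_key="YOUR_OPENAI_KEY",         # placeholder
)
logistics = RoleSettings(
    provider=CUSTOM_LOCAL,
    model="my-local-model",            # placeholder model id
    endpoint="http://localhost:1234/v1",
)
```

Keeping the two roles as independent records mirrors the idea that each role carries its own provider, model, key, and (for Custom / Local) endpoint.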

xAI / Grok behavior

xAI / Grok is a first-class hosted provider choice for both Coding and Logistics.

When a role uses xAI / Grok:

- Genie uses the separate xAI API key saved in Settings.

- The model dropdown uses Genie’s current Grok catalog.

- The default Coding model is `grok-4.20-0309-reasoning`.

- The default Logistics model is `grok-4.20-0309-non-reasoning`.

- Native requests use xAI’s OpenAI-compatible cloud API path.

- No custom endpoint field is shown; use Custom / Local when you need a proxy or local OpenAI-compatible endpoint.

Web Search is not wired for xAI in this release. If you enable Web Search while xAI is selected, Genie will ask you to switch to a supported provider or turn Web Search off.
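The xAI defaults and the Web Search restriction described above can be summarized in a small sketch. The names `XAI_DEFAULT_MODELS` and `web_search_warning` are hypothetical, the warning text is illustrative rather than Genie's actual message, and the check encodes only the xAI restriction stated here; it makes no claim about which other providers support Web Search.

```python
from typing import Optional

XAI = "xAI / Grok"

# Default Grok model per role, as listed in this section.
XAI_DEFAULT_MODELS = {
    "Coding": "grok-4.20-0309-reasoning",
    "Logistics": "grok-4.20-0309-non-reasoning",
}


def web_search_warning(provider: str, web_search_enabled: bool) -> Optional[str]:
    """Return a warning when Web Search is enabled while xAI is selected.

    Returns None in every other case; other providers are not checked here.
    """
    if provider == XAI and web_search_enabled:
        return (
            "Web Search is not available with xAI / Grok in this release. "
            "Switch to a supported provider or turn Web Search off."
        )
    return None
```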

Custom / Local behavior

Custom / Local is for OpenAI-compatible endpoints.

Use it when you want Genie to talk to:

- a local model server

- a self-hosted gateway

- a compatible proxy that exposes OpenAI-style request/response behavior

What you configure

- Model id: any model identifier your endpoint accepts

- Endpoint: per-role base URL such as `http://localhost:1234/v1`

- API key: optional or required depending on your endpoint
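To show how these three values typically fit together, here is a minimal sketch that builds an OpenAI-style chat completion request with Python's standard library. It assumes the endpoint serves the conventional `/chat/completions` path under the base URL; the function name `build_chat_request` and the model id `my-local-model` are placeholders, and this is not a description of how Genie issues requests internally.

```python
import json
import urllib.request
from typing import Optional


def build_chat_request(
    endpoint: str,
    model_id: str,
    prompt: str,
    api_key: Optional[str] = None,
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    # Base URL + conventional path, tolerating a trailing slash.
    url = endpoint.rstrip("/") + "/chat/completions"
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Many local servers ignore this header; gateways often require it.
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps(
        {
            "model": model_id,
            "messages": [{"role": "user", "content": prompt}],
        }
    ).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


req = build_chat_request(
    endpoint="http://localhost:1234/v1",
    model_id="my-local-model",  # placeholder: use an id your endpoint accepts
    prompt="Say hello.",
)
```

Passing `req` to `urllib.request.urlopen` would send it; the sketch stops short of that so it can be read without a running server.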

Connection testing

The Test Connection button checks endpoint reachability before you save. Use it whenever you change the endpoint or switch models/providers.
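If you want to check an endpoint outside the app, a reachability probe along these lines may help. It assumes the server exposes the conventional OpenAI-style `GET /models` route under the base URL, which many OpenAI-compatible servers do; what Genie's Test Connection button actually requests is not documented here, and `probe_endpoint` is a hypothetical helper name.

```python
import urllib.error
import urllib.request
from typing import Optional


def models_url(endpoint: str) -> str:
    """Join the base URL with the conventional /models path."""
    return endpoint.rstrip("/") + "/models"


def probe_endpoint(
    endpoint: str,
    api_key: Optional[str] = None,
    timeout: float = 5.0,
) -> bool:
    """Return True if GET <endpoint>/models answers with a 2xx status."""
    try:
        req = urllib.request.Request(models_url(endpoint))
        if api_key:
            req.add_header("Authorization", f"Bearer {api_key}")
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except (urllib.error.URLError, OSError, ValueError):
        # Connection refused, timeout, HTTP error status, or malformed URL.
        return False
```

For example, `probe_endpoint("http://localhost:1234/v1")` returns `False` when nothing is listening on that port, which usually means the local server is not running or the port is wrong.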

For a step-by-step local setup path, see LM Studio Setup (Custom / Local).

Choosing a provider strategy

- Use OpenAI when you want the most straightforward hosted default.

- Use xAI / Grok when your team wants Grok models for Coding or Logistics with a hosted xAI key.

- Use Custom / Local when you want local control, self-hosting, or compatibility with an existing gateway.

- Use Anthropic or Google when those providers match your team standard or preferred model behavior.

- Split Coding and Logistics only when you want different models for generation vs planning.

- Use an audio-capable Logistics provider if you want provider-backed dictation transcription; otherwise Genie can fall back to macOS speech recognition.

- For a local-model walkthrough, see LM Studio Setup (Custom / Local).

If the UI is locked but provider settings look fine

Activation and provider access are different systems. A correct provider setup does not bypass a locked Genie UI.

Use Licensing and Activation when the interface is locked. Use API and Provider Limits when the interface is available but model calls fail.